Here at CodinGame, we want what’s best for developers and the organizations looking to hire them. We believe that fair, effective (and cool) screening tests are essential to any company’s recruitment process.

We truly want to make a difference in tech hiring, which is why we’re forever trying to improve our solution. Last autumn, for example, we unveiled our new “gamified” coding tests. This time around, we’re reinventing our scoring system!

Whether you’re an existing client or simply curious, you’ll find the lowdown in this article. Read on to find out how to benchmark developers’ technical skills with CodinGame’s comparative score.

We hope to answer all of your questions. If not, you know where to find us!

From the point score to the comparative score

Companies looking to hire skilled developers turn to our solution, CodinGame Assessment, to assess candidates’ technical skills before hiring.

How does CodinGame Assessment work, in a nutshell?

First, you create a tailor-made technical test for your candidates (you can use questions from our vast library of coding exercises or create your own custom questions).

Then, you invite candidates to take your test, as part of your recruitment process. Candidates try their hand at a CodinGame technical test, with real-life coding exercises and gamified puzzles.

Once they’re done, you (the recruiter/hiring manager) receive a detailed test report. This test report is valuable: it’s the key to skill-based recruitment. The report includes all kinds of information that you can lean on to make an informed hiring decision (score per language, score per skill, time per question, success rate, etc.).

What’s the big change?

Until now, the default score displayed on CodinGame test reports was the standard point score. This is no longer the case. The comparative score is the new default score.

What’s the “standard point score”?

The standard point score is a basic score based on candidates’ test answers.

If a candidate provides a correct answer, they earn points. The total number of points earned on a test is the candidate’s point score. We simply divide this score by the total number of points available for the test and multiply by 100 to obtain the percentage score displayed on our test reports.

Note: candidates can also earn points for a partially correct answer.
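
To make the arithmetic concrete, here is a minimal sketch of that calculation. The function name, the three-question test and the point values are made up for illustration; they are not taken from the CodinGame platform.

```python
def percentage_score(earned_points, available_points):
    """Return the point score as a percentage of the points available."""
    total_available = sum(available_points)
    if total_available <= 0:
        raise ValueError("the test must offer at least one point")
    return 100 * sum(earned_points) / total_available


# Hypothetical three-question test worth 20, 30 and 50 points.
available = [20, 30, 50]
# Full marks on the first question, partial credit on the second, none on the third.
earned = [20, 15, 0]

score = percentage_score(earned, available)
print(f"Point score: {score:.1f}%")
```

Here the candidate earns 35 of the 100 available points, so the report would show a point score of 35%.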

What’s the “comparative score”?

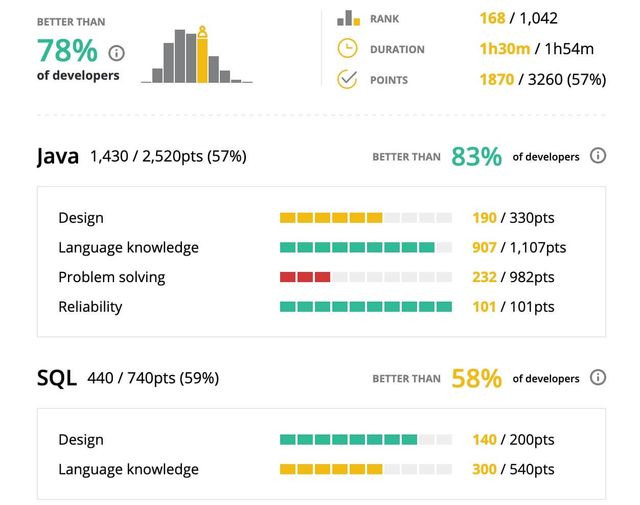

The comparative score doesn’t tell you how well a candidate did on their test in absolute terms, but rather how well they did compared to other developers. We make sure this comparison is fair by only comparing scores on the same questions or (if data is scarce) on questions with the same difficulty score.

Thanks to our vast community of developers and the Artificial Intelligence behind this score, we’re able to rank developers against other potential candidates. We tell you if a candidate did better than 90% of developers, 75% of developers, 40% of developers, etc.
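
As a rough illustration of what “did better than 80% of developers” means, the sketch below ranks one candidate against a list of peer scores. It is a simplified assumption of how such a ranking could be read, not our production algorithm: the function name and the peer scores are invented for the example.

```python
from bisect import bisect_left


def percent_of_peers_beaten(candidate_score, peer_scores):
    """Percentage of peers who scored strictly lower than the candidate."""
    if not peer_scores:
        raise ValueError("need at least one peer score to compare against")
    ranked = sorted(peer_scores)
    # bisect_left counts how many peer scores are strictly below the candidate's.
    beaten = bisect_left(ranked, candidate_score)
    return 100 * beaten / len(ranked)


# Hypothetical point scores (in %) of ten developers on the same questions.
peers = [10, 15, 20, 22, 25, 28, 30, 35, 40, 60]

result = percent_of_peers_beaten(38, peers)
print(f"Better than {result:.0f}% of developers")
```

A candidate with a point score of 38% beats eight of these ten peers, which reads as “better than 80% of developers”.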

Those of you who have been with us for a while (*Hey friends!*) may be wondering “What’s new?”. Yes, the comparative score existed before (as a secondary score). However, today marks a shift in mindset: an innovative approach to tech hiring.

The reasons behind this change

We’ve made this shift for various reasons. Mainly:

Easier test interpretation

We wanted to adjust our scoring system so as to make candidate benchmarking easier and clearer for recruiters.

As the standard point score varies depending on campaign difficulty, it can prove quite tricky to objectively compare candidates’ skill level. For example:

If Bob were to score 30% on a relatively easy coding test, and John were to score 30% on a difficult coding test, they’d both have the same standard point score. However, this doesn’t mean that their skill levels are comparable.

Our new comparative score helps clear up any possible confusion by benchmarking candidates against their developer peers.

Faster results

The comparative score also allows you to start testing and benchmarking developer candidates from the get-go.

For the standard point score to give any real idea of skill level, you need a pool of existing candidate evaluations (companies even turn to their own tech team to make up the numbers). Only by comparing the results you’ve collected can you benchmark candidates and make the right hiring decisions.

With the comparative score, it’s much simpler!

Only have one candidate to assess for the time being? No problemo! We’ll automatically position your candidate relative to developers who’ve taken a similar test.

Fairer recruitment

We strongly believe that the comparative score provides a more reliable reflection of reality: a true representation of candidates’ skills.

Skill-based hiring is about helping developers to showcase their technical skills, no matter their educational, ethnic or professional background.

We wouldn’t want any candidate to be unfairly disregarded. For example:

A candidate who scores 30% on a difficult campaign may be considered below average and put to one side. However, if the comparative score on the same campaign shows that the candidate has obtained a better score than 90% of developers, then they’re sure to get a look in.

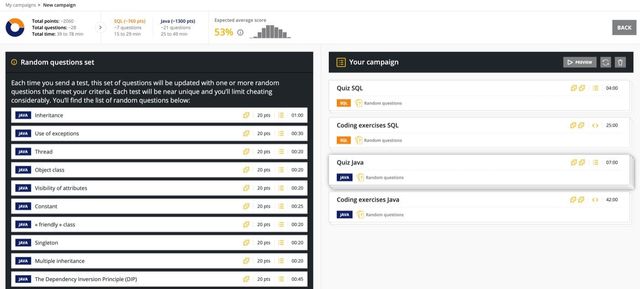

What’s more, our comparative score makes it much easier to use our new random question sets – considerably limiting any risk of cheating.

Another new feature? Yes, sir! You can now create campaigns made up of completely random questions (just set your difficulty level and the programming languages you wish to evaluate). No two tests are the same. This makes cheating near impossible and really lets the comparative score come into its own!

Innovative and global skill-based hiring

We’re looking to make a global difference and bring tech recruitment forward.

Our new comparative score is innovative and technically original. We’re proud to be the first industry players to allow our clients to position their candidates according to a global benchmark.

As the international tech talent market continues to bustle, we’re convinced that a global comparative score will prove to be a true asset to anyone recruiting developers.

The comparative score: the technical lowdown

It took our tech team a long time to perfect the Artificial Intelligence behind our comparative score.

In a nutshell, the comparative score can be computed in two different ways (depending on how much “real” data we’ve been able to collect).

- Using “real” question success scores (based on the analysis of real-life candidates’ tests, scores and feedback)

- Using automated question success scores (based on question difficulty and statistical analysis)
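
One way to picture the choice between these two sources is the sketch below. Everything in it is an assumption made for illustration: the attempt threshold, the difficulty labels and the fallback rates are invented, and the real model is more sophisticated than a simple switch.

```python
# Hypothetical threshold: below this many attempts, observed data is treated as too scarce.
MIN_ATTEMPTS = 30

# Hypothetical fallback success rates per difficulty level.
ESTIMATED_RATE_BY_DIFFICULTY = {"easy": 0.75, "medium": 0.5, "hard": 0.25}


def question_success_rate(successes, attempts, difficulty):
    """Use the observed success rate when enough data exists, else a difficulty-based estimate."""
    if attempts >= MIN_ATTEMPTS:
        return successes / attempts
    return ESTIMATED_RATE_BY_DIFFICULTY[difficulty]


# A well-travelled question: 120 correct answers out of 200 attempts.
real_rate = question_success_rate(120, 200, "hard")
# A brand-new question with only 3 attempts: fall back to the estimate.
estimated_rate = question_success_rate(2, 3, "hard")

print(real_rate, estimated_rate)
```

The first question has plenty of history, so its observed rate is used; the second falls back to the estimate for its difficulty level until more candidates have answered it.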

Maxime, full-stack developer at CodinGame, explains:

“Our comparative score is computed by calculating the expected distribution of scores for an assessment campaign.

To do this, we build a statistical distribution of the expected scores for a given campaign based on the success rate of the questions in said campaign (which is based on all candidates’ results, all clients combined).

When a candidate takes a test, they’re ranked against this distribution, which gives their comparative score. This model is automatically refined over time and as we collect more candidate results.”
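
The idea Maxime describes can be sketched in a few lines. This is a simplified illustration under stated assumptions, not our actual implementation: it treats every question as all-or-nothing and independent of the others, and the function names, point values and success rates are hypothetical.

```python
def expected_score_distribution(questions):
    """Map each possible total score to its probability.

    `questions` is a list of (points, success_rate) pairs. Each question is
    assumed to be all-or-nothing and independent of the others.
    """
    distribution = {0: 1.0}
    for points, success_rate in questions:
        updated = {}
        for total, probability in distribution.items():
            # The question is failed: the running total is unchanged.
            updated[total] = updated.get(total, 0.0) + probability * (1 - success_rate)
            # The question is solved: its points are added to the running total.
            solved = total + points
            updated[solved] = updated.get(solved, 0.0) + probability * success_rate
        distribution = updated
    return distribution


def rank_against_distribution(candidate_points, distribution):
    """Percentage of the expected population scoring strictly below the candidate."""
    below = sum(p for total, p in distribution.items() if total < candidate_points)
    return 100 * below


# Hypothetical two-question campaign: (points, success rate across all candidates).
campaign = [(10, 0.5), (20, 0.5)]

distribution = expected_score_distribution(campaign)
rank = rank_against_distribution(20, distribution)
print(f"Better than {rank:.0f}% of developers")
```

In this toy campaign the four possible totals (0, 10, 20 and 30 points) are equally likely, so a candidate with 20 points sits above half of the expected population. As more results come in, the success rates change and the distribution shifts with them.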

When should you expect to see these changes?

They’re already here!

We’ve taken the plunge. The comparative score is now the default score.

Our existing customers can still choose to back-pedal and revert to the old scoring system. However, we highly recommend using the comparative score to benchmark coding skills.

Why now?

We’ve been umming and ahhing about making this change for a while. First, we wanted to perfect the algorithm that the comparative score is based on. Second, we wanted to make sure that it was in our clients’ best interests.

Today, we’re confident that now is the right time.

The comparative score has proved its effectiveness, popularity and value to clients. Indeed, in a recent customer survey that Jerome (our Product Manager) sent out, 89.9% of respondents told us that they already take the comparative score into account when analyzing candidate results. What’s more, 76.3% of clients consider the comparative score to be more important than, or just as important as, the standard point score.

On another note, we also believe that now is the time to reinforce our anti-cheating policies.

We’ve always closely monitored cheating and done everything we can to avoid it. Thankfully, only 9.4% of our clients have experienced cheating issues. Even so, we want to nip this issue in the bud: switching to a comparative score will reinforce our efforts and make cheating near impossible.

Your next steps

So, what now? Well, it’s time to try it out for yourself!

If you’re not yet a user of our tech hiring platform, why not get in touch to find out more? Or simply start a free trial and take a look for yourself.

If you’re an existing customer, simply hop over to your CodinGame for Work workspace and create a new campaign. Follow the usual steps, then pay special attention to your list of questions. Check out the question sets marked “Random questions”.

Once your campaign is underway, you’ll start receiving test result reports and you’ll be able to see our new and improved comparative score in action! If anything is unclear, you can always seek help from our friendly support team. Simply click on “Get help”.

If you only remember two things

This was a rather long and technical article. If you only remember two things, remember this:

- Until now, the default score displayed on CodinGame test reports was the standard point score. The comparative score is the new default score for all test reports.

- The comparative score presents several advantages. Above all, it makes it easier to evaluate and benchmark developers’ technical skills (a fair, reliable representation of developers’ skill level, compared to other developers in their field).