This article was written by both Magus and Agade.

If you often hang out on CodinGame’s chat, you’ve most likely already seen a talking chatbot. Otherwise, join the world chat and type

Hello Automaton2000

Automaton2000 should then answer you. Automaton2000 is a chatbot; it generates sentences when you talk to it. Its little brother, AutomatonNN, does the same, but with a different algorithm.

CodinGame and Its Chatbots

Chatbots have been on CodinGame pretty much since CodinGame had a chat.

CodinGame’s first chatbot was called cgbot and dates back to 3 years ago. It was created by a developer from CodinGame, based on an open-source project in Python. Unfortunately, cgbot was written for the older IRC protocol and was never ported to XMPP when the chat was updated.

There are several chatbots on CodinGame’s chat. Two bots which are present almost all the time:

- Automaton2000

- AutomatonNN

And two other bots which visit on occasion:

- Neumam (Agade’s python Neural Network or Automaton2000 C++-port)

- Agade_fr (Neumann’s bot using Automaton2000’s javascript code with log subsets)

Ironically, the very first version of Automaton2000 is actually a personal IRC chatbot project unrelated to CodinGame. The first version of Automaton2000 as a chatbot on CodinGame was based on this original code.

Why Automaton2000?

In the Starcraft universe, Automaton2000 is a useless robot with no purpose other than to be ornamental.

Automaton2000

Automaton2000 was coded in NodeJS using the simple-xmpp library. To generate a sentence, Automaton2000 builds a Markov chain based on all the messages in the logs of the chatroom. Automaton2000 can be seen as a structure of 3 modules:

- A module which listens to the chatroom and logs all messages

- A module which builds the Markov chain from the logs

- A module which generates a random sentence using the Markov chain

Logging All Messages

This part of Automaton2000 doesn’t use any particular algorithm. It merely logs every message into files.

If you want to develop your own chatbot one day, here are some tips.

First, store all available information, even if you don’t currently plan on using it. Automaton2000 copies each message in the form:

(09:04:47) Magus : Hello everyone !

The timestamp and username are information which Automaton2000 does not currently use, but could be useful.

Second, don’t forget to have an automatic reconnection scheme. Otherwise you’ll waste a lot of time restarting your bot manually, as it could lose connection with the chat for many different reasons (connection loss, chat server reboot…).

And last, write a service that can easily extract information from the log files.

Building the Markov Chain

A Markov chain is simply a directed graph with weighted edges linking states with each other. Each edge represents the probability of moving from one state to another. In the specific case of the Automaton2000, we’re using the logged messages to create our Markov chain: Each word is a node, and each edge represents the probability that Automaton2000 says both words one after the other.

To build the chain, the sentences are cut into several parts (one word per part). For example, the sentence “Hello coders of the wild!” will be cut into the following parts:

__START__, Hello, coders, of, the, wild, !, __END__

These parts are then used to extend and improve the chain. The Markov chain is stored in a variable and completely rebuilt from all read chat logs when the bot starts.

There are two cases in which a word should be added in the chain:

- Either the word doesn’t exist in the chain, and then a new node is created, or

- The word already exists in the chain and only the edge between the word and the one preceding it in the original sentence will be updated.

The edges between the nodes contain only the number of times two words have been seen one after the other. For example, Hello could have been followed by everyone 30 times, world 42 times and __END__ 53 times. These are the values used in the algorithm that generates the new sentences.

An issue with nodes representing one word only was that Automaton2000’s sentences were quite chaotic. Thus, we had to introduce the notion of “state history” into the Markov chain. Concretely, it means that to determine one next word, we’ll use 2 or more words. The algorithm becomes way more complex as we increase the number of edges between the nodes.

If you use a history of 2, the nodes now contain 2 words instead of one. You won’t have just one node with Hello, but several nodes: Hello everyone, Hello world and Hello __END__.

You can imagine the consequences in the number of nodes and edges of the graph in the Markov chain.The first version of Automaton2000 couldn’t take into account a history of 2 words, else it would take all the server’s RAM.

Generating Random Sentences

Generating a random sentence is done directly by using the Markov chain. The algorithm starts at the __START__ node and moves on the graph until it reaches the __END__ node. Nodes are chosen randomly using the probability of each edge (and the history).

It may look simple, but there are indeed hidden traps along the path.

The Markov chain is not acyclic so the first trap to avoid is the infinite loop. In the same vein, the algorithm should not generate sentences that are too long. To avoid this, Automaton2000 has two limits on the number of words used. Once it reaches the first limit, its algorithm will start to favor the __END__ node as next node, to force the sentence to come to an end. After the second limit is reached, the sentence is directly cut. It prevents Automaton2000 from writing sentences with hundreds of words.

On the opposite end of the spectrum, the algorithm should avoid sentences that are too short as well as empty sentences. The algorithm reduces the probability of the __END__ node as long as some limit is reached. Technically, an empty sentence is possible, but the algorithm has a fail-through to avoid it.

Finally, to avoid stupid things, the chatbot shouldn’t take into account its own sentences. It should not learn from them and certainly should not answer them. Else, you risk having a chatbot that talks to itself for several hours.

Two Years Later

This first version of Automaton2000 worked well for approximately 2 years. Unfortunately, after two years, the Markov chain had become too big, and it was impossible to store it in RAM (Automaton2000’s process was exceeding 3 GB of RAM). Because of this, Automaton2000 was silenced. It was still storing the receiving messages but couldn’t generate any new sentences.

So before Automaton2000 could speak again, a solution had to be found.

The C++ Version

The first attempt to resurrect Automaton2000 was coded entirely in C++, inspired by the first version in NodeJS. It’s easier to control memory usage in C++. Plus, a system to clean the Markov chain was put in place: links with very low probability were regularly deleted, as well as nodes without any links.

But this version had two drawbacks.

First, it required the installation of gloox, a C++ library to handle XMPP protocol, and which needed to be compiled with a specific version. And second, it required a very recent version of g++. These peculiarities made the use of the C++ version quite difficult on different machines. The server that hosts Automaton2000 isn’t up-to-date, so it was impossible to get the last version of g++. Plus it wouldn’t work on Windows…which can be quite annoying.

Because of this, this version never got deployed on the server and so was dropped.

Other Leads

We explored other options, but couldn’t come to a successful working version. We had the idea to only take into account messages from the X previous months and not the whole history. Which could have worked, but it would have been a bit sad. We also tried to store the Markov chain on the hard drive in multiple files to prevent it from directly impacting the RAM. Storing the chain in a noSQL database like mongodb was another idea.

Some of these leads might have worked with more effort and time…but in the end, a quite different solution was found.

The Final Version

The current version of Automaton2000 is composed of 2 programs. The front, in NodeJS, handles the XMPP protocol and manages the files containing all received messages. The code, in C++, manages the creation of the Markov chain and the generation of random sentences.

Automaton2000’s C++ core has been optimized to use the least amount of RAM possible, but it mainly uses a few tricks. First, it deletes all weak edges of the Markov chain, a trick from the first version in C++, as explained above. Second, it only uses lowercase characters, which greatly reduces the size of the chain. Consequently, Automaton2000 cannot generate a sentence containing an uppercase character… An acceptable trade-off.

As we write this article, Automaton2000’s core uses a history of 3 words to select the next word when generating a random sentence. The Markov chain currently contains 850,000 possibilities, handled with less than 800 MB of RAM. This couldn’t happen with NodeJS only.

The use of NodeJS, though, wipes the drawbacks of C++ (the dependency to a library to handle the XMPP protocol and the management of files) away. It allows Automaton2000 to work on every station with NodeJS and g++ available.

As far as its future goes, this version could eventually become too greedy. In a few years, it probably will. In this case, the last solution would be to use a noSQL database.

AutomatonNN, the Neural Network

A fancier idea to reduce the RAM consumption of Automaton2000 was to use a Neural Network (NN) in place of the Markov chain, which led to the creation of AutomatonNN.

One very attractive possibility of using NNs as chatbots is their ability to take context into account. The NN could then be able to follow the conversation and speak on-topic. Unfortunately, the current implementation does not fully reflect this ideal yet…

To make this first version, I followed this tutorial, which generates Shakespear-esque text. The NN is a “Gated recurrent unit” (GRU), a type of recurrent neural network (RNN) which takes in, as input, characters of sentences one by one and predicts the following character. The recurrent net takes in as input a character (+ the internal state of the RNN) and outputs a probability distribution of what the next character could be.

The RNN is thus trained on a text corpus to predict what follows in the text. In our case, it is trained on the chatlogs in a username:text format. When its name is called, it gets as input “AutomatonNN:” and the following characters are selected randomly following a multinomial distribution on the probabilities outputted by the NN.

By its architecture, it is a bit slow because you have to do a forward-pass through the network for each character in the message. Other architectures function on the level of words, as opposed to this character-level bot. Such a character-level bot is also capable of misspelling (it might be desirable to reproduce common typos and variations) and producing non-words.

Such a RNN has an internal state, a kind of memory, which theoretically allows it to remember important things such as topic and the names of people currently active in the chat, helping it predict what the following text will be. The NN, in principle, learns during training what it should remember. Currently the NN doesn’t seem to take context into account too much. Instead, it seems to mostly make average sentences filled with words like “code,” “contest,” “chat,” “merde” and, on the English channel, “eulerscheZahl.” But the NN has already had some moments, where it, for example, told Madknight he was “legend in chat.”

Training is done on all the chat logs, cut into extracts of approximately 1000 characters, and takes approximately 2 hours on a GTX 1070. Training is done once; AutomatonNN does not learn online during conversations. Its internal weights are fixed and only its internal memory is affected by chat participants. The memory footprint is minimal because the weights of the network represent only about 20 MB per channel.

Many improvements are still possible, such as obtaining more chat logs (e.g., from before Magus started recording on Automaton2000, or just by waiting), measuring response quality better with the BLEU metric and being able to stop training at a better optimum, using a more modern architecture (the current one being a bit of a “Hello world”), using a word-level bot or even using a higher-level “Natural Language Processing” (NLP) framework such as sockeye…

Why Chatbots

Why did we create chatbots? Why didn’t we simply use an existing chatbot?

As with most personal projects: the sheer fun of it. Automaton2000’s only purpose was to be fun. Through its development and its behavior. As you can see, the development of a chatbot allows you to encounter a few interesting problems such as availability, scalability, memory management…

If you want to learn how to build a chatbot with a few lines of Python, check Maxime’s playground.

If you had to retain one thing from this article, remember that working on personal projects is always useful. Have fun with coding. And you’ll keep the passion for programming.

Automaton2000 and AutomatonNN Anthology

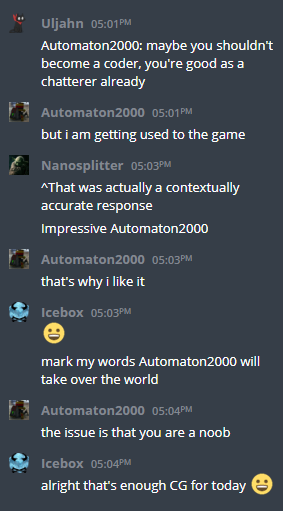

Here below are a few fun excerpts from conversations with the bots (often translated from French).

Silmaen 03:46PM

AutomatonNN say turtle!

AutomatonNN 03:46PM

turtle turtle turtle turtle turtle turtle turtle turtle turtle turtle turtle turtle turtle turtle

Stilgart 03:48PM

So…

Did AutomatonNN really looped on turtle?

AutomatonNN 03:48PM

yes

Stilgart 03:49PM

Or maybe they cheated

Razovsky 03:49PM

Of course they cheated

AutomatonNN say turtle!

AutomatonNN 03:50PM

Hello everyone and Automaton2000

Bob 04:55PM

spam push FTW

do you spam push, Automaton2000 ?

Automaton2000 04:55PM

Not taking several samples?

Bob 04:55PM

ok as you wish

you choose Automaton2000

Automaton2000 04:55PM

gg Aveuh by the way ^^

Bob 04:56PM

are you kidding Automaton2000, he’s not even gold

MooMooN 04:56PM

yes but is automaton2000 gold?

Automaton2000 04:56PM

he won’t be for a few ranks

Automaton2000 01:30PM

Magus is a blunder

[CG]SaiksyApo 02:17PM

Stop spamming Automaton2000

Automaton2000 02:17PM

Nope

Magus 02:27PM

I’m your creator Automaton2000, respect me !

Automaton2000 02:27PM

You have given me grief at each push_back

Automaton2000 05:52PM

let me see Neumann so that we test each other mutually

Neumann 05:53PM

uh…

Magus 05:53PM

wow wow wow

Agade 05:53PM

wow

Automaton2000 10:10AM

you are all idiots